The use of Artificial Intelligence (AI) in finance promises to help overcome many long-standing barriers to financial inclusion. The formal financial sector has been beyond the reach of low-income and remotely located users in India for numerous reasons. As we battle a global pandemic that could further isolate people who are excluded from formal finance or who are geographically dispersed, it becomes even more critical to understand any methods that can help overcome these barriers. Some of the most enduring barriers to accessing formal financial services for low-income users include the lack of formal records of income and expenditure, the lack of a proper credit history, and the relatively high unit cost of providing services at the last mile (Kemp, 2017).

Many are now looking to AI techniques to help providers engage with low-income users, assess their creditworthiness and repayment capacity, and create tailored credit products (Experian, 2019). The Union Budget 2020-21 pushed for the proliferation of AI use in India as the next step in India’s digital revolution (ETTelecom, 2020). This comes against the backdrop of the Indian FinTech ecosystem rapidly adopting AI in various aspects of its processes, from customer acquisition to transaction management. Despite these developments, the Indian policy discourse on consumer protection considerations when using AI in finance remains limited. This blogpost seeks to begin that conversation. It introduces the term AI and describes some applications in the Indian digital credit sector, followed by an overview of consumer protection concerns emerging from these applications of AI in finance in India.

1. What is Artificial Intelligence?

In 1956, a group of experts at the Dartmouth Summer Research Project convened to assess if it were possible to make a machine simulate every aspect of human intelligence and learning. They coined the term “Artificial Intelligence” (AI) referring to machines that can “use language, form abstractions and concepts, solve kinds of problems now reserved for humans, and improve themselves” (McCarthy et al., 1955).

More recently, the Financial Stability Board’s (FSB) definition conceptualised AI as IT systems that can display intelligence to ask questions, discover and test hypotheses, and make decisions automatically based on advanced analytics by operating on extensive data sets (Financial Stability Board, 2017).

These definitions reveal the wide range of applications and processes that can be encompassed within the term AI. A useful categorisation for this analysis divides AI into three types, based on the degree of intelligence it can display:

a. Artificial Super Intelligence, where computers have surpassed human intelligence in all domains.

b. Artificial General Intelligence, where computers are as intelligent as humans in all domains.

c. Artificial Narrow Intelligence (ANI), where computers can show human-like intelligence in narrow domains defined by a human programmer (Marda, 2018).

Most of the AI that is in use today demonstrates narrow intelligence where the algorithms[1] use different computing and statistical methods to intuitively detect and learn patterns in training data and enhance their own performance. This includes machine-learning (ML) algorithms which use large amounts of training data to recognise patterns (Surden, 2014), and more recent developments like deep learning and natural language processing that are based on similar but more complex pattern recognition, programming and automation methods (Taulli, 2019).

Given this landscape and the current state of play, the AI discussed in this post largely relates to artificial narrow intelligence.
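To make the idea of learning patterns from training data more concrete, here is a minimal sketch of a narrow, single-purpose model. It is not drawn from any provider mentioned in this post. The two features (share of on-time payments and debt-to-income ratio), the eight training records and the function names are all hypothetical, and a real credit model would use far more data and validation.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(rows, labels, epochs=2000, lr=0.1):
    """Fit a logistic regression by plain gradient descent on the log-loss."""
    n_features = len(rows[0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(rows, labels):
            prediction = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))
            error = prediction - y
            for j, xi in enumerate(x):
                grad_w[j] += error * xi
            grad_b += error
        # Move each parameter a small step against its average gradient.
        weights = [w - lr * g / len(rows) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(rows)
    return weights, bias


def predict_default_probability(weights, bias, x):
    """Estimated probability that a borrower with features x defaults."""
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))


# Hypothetical training data: [share of on-time payments, debt-to-income ratio]
TRAINING_ROWS = [
    [0.95, 0.10], [0.90, 0.20], [0.85, 0.15], [0.80, 0.30],
    [0.40, 0.70], [0.30, 0.80], [0.50, 0.90], [0.20, 0.60],
]
TRAINING_LABELS = [0, 0, 0, 0, 1, 1, 1, 1]  # 1 = the borrower defaulted

weights, bias = train_logistic(TRAINING_ROWS, TRAINING_LABELS)
reliable = predict_default_probability(weights, bias, [0.92, 0.12])
risky = predict_default_probability(weights, bias, [0.25, 0.85])
print(f"reliable borrower: {reliable:.2f}, risky borrower: {risky:.2f}")
```

The model is "narrow" in exactly the sense described above: it can only rank borrowers on the two features it was shown, and it has nothing sensible to say about a borrower whose circumstances are not reflected in its training data.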

2. Artificial intelligence and credit in India

Indian FinTechs have quickly adopted AI algorithms in their business processes. Many FinTech providers like Capital Float, Flexiloans and Lendingkart are using AI in their user-facing activities for a variety of purposes including risk analysis, credit underwriting, providing wealth management advice and fraud detection (Agarwal, 2019a).

Our review of digital credit lenders revealed that many digital credit firms in particular claim to use AI algorithms for risk-modelling and credit underwriting decisions. This review is based on our analysis of websites, news reports and research reports relating to the use of AI in lending in India. These sources suggest that FinTech providers in India appear to be using AI in their lending processes to:

- process different kinds of data points such as credit bureau records, bank account records, social media activity and public records to assess users’ creditworthiness more quickly (Vishav, 2019; Taylor, 2019);

- pre-empt defaults by analysing a variety of data points like income levels, demographics, credit history, payment history and usage patterns (WNS, n.d.);

- assess the risk of default and likelihood of fraud (Vishav, 2019);

- automate and speed up loan application processes (Vishav, 2019; Taylor, 2019);

- standardise loan decisioning processes (Taylor, 2019);

- provide personal wealth management services, improving credit health and improving savings (Sarmah, 2019);

- undertake fraud detection and prevention, and anti-money laundering monitoring (Mirjankar, 2020);

- deliver personalised banking and digital marketing (Mirjankar, 2020); and

- assist consumers through Robo-advisory services (Mirjankar, 2020).

In particular, a notable number of Indian FinTech startups are using AI in their lending[2] processes (Agarwal, 2019a). These firms, as reviewed by Agarwal (2019a), each appear to use AI to meet different objectives:

- mPokket: mPokket provides credit exclusively to students above the age of 18 years (mPokket, 2018). mPokket uses AI to process thousands of demographic, social, behavioural, financial and transactional data points to assess students’ creditworthiness. It also uses AI in its customer on-boarding, customer interaction and debt collection processes.

- Paisadukan: Paisadukan is a P2P lending platform that provides credit to rural user segments. It uses AI for credit assessments for rural users who may not have sufficient documents for traditional credit scoring. Paisadukan also uses AI to assist lenders in their credit decisioning processes.

- Shubh Loans: Shubh Loans provides credit to users who are presently excluded from the formal credit system in India (Shubh Loans, 2019). Shubh Loans uses AI to process large amounts of non-traditional data points on unserved and underserved users for credit-scoring and lending.

- Capital Float: Capital Float uses AI for risk assessment, credit decisioning, collections, pre-empting bad loans and marketing. It also uses AI for analysing expenditure behaviour to provide personal wealth management services and lend credit through its mobile-based application, Walnut (Yadav, 2020).

- Creditmate: Creditmate uses AI to learn from the knowledge that traditional collections agents possess. The AI system will use these insights to recommend efficient collection strategies that can reduce bad debts and NPAs. Creditmate is also using AI to score debt defaulters and manage debt resolution processes (Agarwal, 2019b).

- Flexiloans: Flexiloans uses advanced data processing technology that can analyse speech and process large amounts of data points to inform better lending decisions. With the help of AI, Flexiloans seeks to create a fully automated lending platform that can more efficiently onboard customers, detect anomalies, identify frauds and eliminate human bias in the lending process.

- Lendingkart: Lendingkart uses AI to process ten thousand data points for credit-scoring and debt collection, and for predicting and addressing risky loans before default.

However, the use of AI is not the preserve of start-ups alone. Incumbent banks are also noted to be using AI in their processes. For instance, State Bank of India (SBI), HDFC Bank, ICICI Bank and Axis Bank have launched AI-driven chatbots to assist users with their enquiries about banking products and services, as well as to assist in conducting a variety of transactions (Baruah, 2020; Chitra, 2019). The chatbots have been trained using large sets of past customer enquiries, and SBI’s chatbot is reported to be able to handle up to 864 million enquiries a day (Baruah, 2020).

Many fintechs and banks assert that using AI for these purposes has allowed them to serve consumers who lack a formal credit history and consumers who have been excluded from the formal financial system (Vishav, 2019).

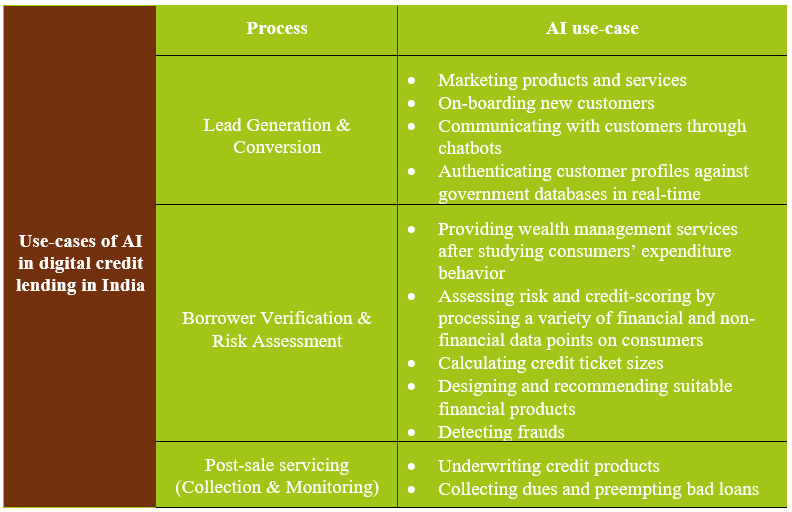

This review of the Indian digital credit market reveals that AI-enabled processes are now being used across the chain of traditional credit delivery. While doing so, these methods are also able to add value in new ways for providers and consumers. Based on publicly available information and insights from our Digital Credit Roundtable on the process of digital lending (Dvara Research, 2017), we represent the AI deployment in India across the chain of lending-related processes in Table 1.

Table 1: Use-cases for AI algorithms in digital credit lending in India

Sources: (Dvara Research, 2017; mPokket, 2018; Agarwal, 2019a; Agarwal, 2019b; Sarmah, 2019; Taylor, 2019; Vishav, 2019; Chitra, 2019; Shubh Loans, 2019; WNS, n.d.; Baruah, 2020; Mirjankar, 2020; Walker, 2020; Yadav, 2020).

Credit providers are processing a wide variety of data points, including users’ personal information, text messages, online and social media activity, and expenditure and consumption patterns, for the purposes listed in Table 1.

These developments could play an important role in expanding access to credit, and to finance more widely, for more individuals. Meanwhile, the nature of these processes and the intensity of the personal information that they use can also create new consumer protection concerns that must be addressed.

3. User protection considerations from the use of AI in finance

The ability of an AI-based system to process large amounts of data rapidly at scale differentiates it from traditional data processing technologies. Financial sector entities have been using technology to process users’ personal data for a variety of purposes. However, as the use of AI in finance scales, there could be some unintended risks and harms that the technology poses to users. These AI systems could unintentionally also harm segments of the population who may be traditionally disadvantaged or vulnerable (Singh, Raghavan, & Chugh, 2019).

It is important to take note of these concerns to ensure that the benefits of AI can be harnessed without perpetuating user harms experienced in traditional financial systems. For this, it is crucial for AI technology developers, providers and policymakers to be cognisant of the ways in which AI systems can create and perpetuate risks for consumers.

For instance, in data-intensive AI-driven systems, the lack of particular kinds of data about a user can lead to incorrect assessments. This was seen in the case of IBM’s supercomputer, Watson, which was used to provide recommendations for treating cancer patients. Watson gave incorrect, and sometimes unsafe, treatment recommendations for certain patient conditions because it was trained using hypothetical data (Chen, 2018). AI systems must be trained on good-quality data to perform well, and in its absence they can commit errors similar to Watson’s. In digital credit, using algorithmic models that are trained on incorrect, incomplete or hypothetical data can lead to mis-selling, which can push users into over-indebtedness (Financial Stability Board, 2017; The Deloitte Centre for Regulatory Strategy, 2018).

Further, AI-based systems can perpetuate biases present in data but do so at scale, without traceability and often with the impression that there is no bias given the automated nature of the system. For instance, a recent case of alleged gender discrimination arising from automated credit decisions came to light in the processes of the Goldman Sachs-Apple credit card. The issuer sanctioned a significantly lower credit limit to a wife than to her husband. This occurred even though the two filed joint tax returns and the wife had a better credit score than her husband. Concerns were raised that the machine learning algorithm, in this case, was unintentionally assigning female applicants lower credit limits (Natrajan & Nasiripour, 2019).
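One simple way a lender or auditor might look for this kind of pattern is to compare outcomes across groups. The sketch below is only illustrative: the records, the field names (`gender`, `credit_score`, `credit_limit`) and the helper functions are hypothetical, and it is not a description of how the issuer in the case above, or any regulator, actually tests for bias.

```python
from statistics import mean


def mean_limit_by_group(applicants, group_key="gender", outcome_key="credit_limit"):
    """Average the sanctioned credit limit within each group."""
    grouped = {}
    for applicant in applicants:
        grouped.setdefault(applicant[group_key], []).append(applicant[outcome_key])
    return {group: mean(values) for group, values in grouped.items()}


def disparity_ratio(group_means):
    """Ratio of the lowest group mean to the highest (1.0 means parity)."""
    return min(group_means.values()) / max(group_means.values())


# Hypothetical automated decisions for applicants with similar credit scores.
DECISIONS = [
    {"gender": "F", "credit_score": 780, "credit_limit": 5000},
    {"gender": "F", "credit_score": 760, "credit_limit": 6000},
    {"gender": "M", "credit_score": 750, "credit_limit": 20000},
    {"gender": "M", "credit_score": 770, "credit_limit": 24000},
]

group_means = mean_limit_by_group(DECISIONS)
ratio = disparity_ratio(group_means)
print(group_means, f"disparity ratio: {ratio:.2f}")
```

A low ratio does not prove discrimination on its own, since groups can differ in ways that legitimately affect credit limits. It does, however, flag decisions that deserve closer human review, which is hard to do when the model's outputs are never broken down by group.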

Another example of risks from AI systems is the creation and use of “deepfakes” for cybercrime. Deepfakes are highly realistic, lifelike reproductions of human faces or voices created using AI systems. In the first known case of cybercrime involving deepfakes, in 2019, the culprit used deepfake technology to mimic the voice of a company’s CEO and defraud an employee into paying significant sums of money (O’Donnell, 2019). Incidents like these could become more widespread over time as AI systems become more mainstream. Policymakers must be conscious of the risks and harms from deepfakes, especially as more services and activities become digital after the COVID-19 pandemic.

Such cases can prove to be particularly problematic in a country like India, which has large populations of historically excluded user groups and first-time users of digital services who may have little or no information of the kind required for credit decisioning.

4. Emerging regulatory responses

Regulators around the world and in India are attempting to create safeguards and mitigate harms by creating governance frameworks for AI (NITI Aayog, 2018; Dutton, 2018). Internationally, the European Union, the OECD and countries like Singapore have been devising policies and strategies to govern the use of AI. India does not yet have a full-fledged framework for governing AI. However, some recent policy developments in India, like NITI Aayog’s Discussion Paper on the National Strategy for Artificial Intelligence (2018), suggest movement towards devising policies on AI. Similarly, the Personal Data Protection Bill, 2019 would allow the future data protection regulator to set up an innovation sandbox for the growth of technologies like AI. Financial sector regulators are also releasing sector-specific policies related to AI. The Reserve Bank of India (RBI), the Securities and Exchange Board of India (SEBI) and the Insurance Regulatory and Development Authority of India (IRDAI) have introduced regulatory sandboxes to test financial services based on AI. In addition to the sandbox, the RBI has created a special unit to study the use of AI in the interests of policymaking (Rebello, 2019; SEBI, 2019a; Reserve Bank of India, 2019; IRDAI, 2019; SEBI, 2019b).

These policy developments are important as they come at a time when many financial entities and FinTech providers are adopting algorithmic models for various purposes in the banking, insurance, mutual funds and securities markets. We will explore these policy developments, both in India and globally, in future blogs on this topic.

___

References

Agarwal, M. (2019a, November 16). 10 Indian Startups With Unique Fintech Solutions That Use AI Algorithms And Models. Retrieved from Inc42: https://inc42.com/features/10-indian-startups-with-unique-fintech-solutions-that-use-ai-algorithms-and-models/

Agarwal, M. (2019b, December 12). CreditMate is using ML to solve debt collection and digital lending NPAs. Retrieved from Inc42: https://inc42.com/startups/creditmate-is-using-ml-to-solve-debt-collection-and-digital-lending-npas/

Barbaschow, A. (2019, July 24). Microsoft and the learnings from its failed Tay artificial intelligence bot. Retrieved from ZDNet: https://www.zdnet.com/article/microsoft-and-the-learnings-from-its-failed-tay-artificial-intelligence-bot/

Baruah, A. (2020, February 27). AI Applications in the Top 4 Indian Banks. Retrieved from Emerj: https://emerj.com/ai-sector-overviews/ai-applications-in-the-top-4-indian-banks/

Chen, A. (2018, July 26). IBM’s Watson gave unsafe recommendations for treating cancer. Retrieved from The Verge: https://www.theverge.com/2018/7/26/17619382/ibms-watson-cancer-ai-healthcare-science

Chitra, R. (2019, July 16). Banks use AI everywhere, from chatbots to validating cheques. Retrieved from The Times of India: https://timesofindia.indiatimes.com/business/india-business/banks-use-ai-everywhere-from-chatbots-to-validating-cheques/articleshow/70237317.cms

Chugh, B. (2019, September 19). Financial Regulation of Consumer-facing FinTech in India: Status Quo and Emerging Concerns. Retrieved from Dvara Research: https://dvararesearch.com/wp-content/uploads/2019/09/Financial-regulation-of-consumer-facing-fintech-in-India-status-quo-and-emerging-concerns.pdf

Chugh, B., & Kumar, N. (2017, November 7). Harms to Consumers in a Modular Financial System. Retrieved from Dvara Research: https://dvararesearch.com/2017/11/07/harms-to-consumers-in-a-modular-financial-system/

Dutton, T. (2018, June 29). An Overview of National AI Strategies. Retrieved from Medium: https://medium.com/politics-ai/an-overview-of-national-ai-strategies-2a70ec6edfd

Dvara Research. (2017, July 3). Insights from the “Digital Credit Roundtable” hosted by the Future of Finance Initiative. Retrieved from Dvara Research: https://dvararesearch.com/2017/07/03/insights-from-the-digital-credit-roundtable-hosted-by-the-future-of-finance-initiative/

Ericsson. (2019, June 19). Data usage per smartphone is the highest in India- Ericsson. Retrieved from Ericsson: https://www.ericsson.com/en/press-releases/2/2019/6/data-usage-per-smartphone-is-the-highest-in-india–ericsson

ETTelecom. (2020, February 1). Budget 2020: Nirmala Sitharaman says proliferation of AI, ML is on backdrop. Retrieved from ET Telecom: https://telecom.economictimes.indiatimes.com/news/nirmala-sitharaman-ai-ml-on-the-backdrop-of-union-budget-2020-21/73831627

Experian. (2019, May 22). 2019 State of Alternative Credit Data Whitepaper. Retrieved from Experian: https://www.experian.com/blogs/insights/2019/05/state-of-alternative-credit-data/

Financial Stability Board. (2017, November 1). Artificial intelligence and machine learning in financial services. Retrieved from Financial Stability Board: https://www.fsb.org/wp-content/uploads/P011117.pdf

Gillespie, T. (2014). The Relevance of Algorithms. Retrieved from Microsoft: https://www.microsoft.com/en-us/research/wp-content/uploads/2014/01/Gillespie_2014_The-Relevance-of-Algorithms.pdf

Independent High-Level Expert Group on Artificial Intelligence. (2019, April 8). Ethics Guidelines for Trustworthy AI. Retrieved from European Commission: https://ec.europa.eu/digital-single-market/en/news/ethics-guidelines-trustworthy-ai

IRDAI. (2019, August 22). Press Release: Regulatory Sandbox Approach. Retrieved from Insurance Regulatory and Development Authority of India: https://www.irdai.gov.in/ADMINCMS/cms/frmGeneral_Layout.aspx?page=PageNo3880

Kemp, K. (2017, August 22). Big Data, Financial Inclusion and Privacy for the Poor. Retrieved from Dvara Research: https://dvararesearch.com/2017/08/22/big-data-financial-inclusion-and-privacy-for-the-poor/

Marda, V. (2018, September 10). Artificial Intelligence Policy in India: A Framework for Engaging the Limits of Data-Driven Decision-Making. Retrieved from SSRN: https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3240384

Mirjankar, R. (2020, January 19). 7 Ways Digital Disruption is shaping the Indian Banking Sector. Retrieved from Outlook Money: https://www.outlookindia.com/outlookmoney/banking/7-ways-digital-disruption-is-shaping-the-indian-banking-sector-4214

mPokket. (2018). FAQs. Retrieved from mPokket: https://mpokket.com/index.html#howitworks

Natrajan, S., & Nasiripour, S. (2019, November 10). Viral Tweet About Apple Card Leads to Goldman Sachs Probe. Retrieved from Bloomberg: https://www.bloomberg.com/news/articles/2019-11-09/viral-tweet-about-apple-card-leads-to-probe-into-goldman-sachs

NITI Aayog. (2018, June). National Strategy for Artificial Intelligence Discussion Paper. Retrieved from NITI Aayog: https://niti.gov.in/writereaddata/files/document_publication/NationalStrategy-for-AI-Discussion-Paper.pdf

O’Donnell, L. (2019, September 4). CEO ‘Deep Fake’ Swindles Company Out of $243K. Retrieved from Threatpost: https://threatpost.com/deep-fake-of-ceos-voice-swindles-company-out-of-243k/147982/

Rebello, J. (2019, August 27). New RBI unit to track blockchain and AI. Retrieved from ET Markets: https://economictimes.indiatimes.com/markets/stocks/news/new-rbi-unit-to-track-blockchain-and-ai/articleshow/65557685.cms?from=mdr

Reserve Bank of India. (2017, November 9). Directions on Managing Risks and Code of Conduct in Outsourcing of Financial Services by NBFCs. Retrieved from Reserve Bank of India: https://www.rbi.org.in/scripts/NotificationUser.aspx?Mode=0&Id=11160

Reserve Bank of India. (2019, August 13). Enabling Framework for Regulatory Sandbox. Retrieved from Reserve Bank of India: https://www.rbi.org.in/Scripts/PublicationReportDetails.aspx?UrlPage=&ID=938#2

Sarmah, H. (2019, July 22). 5 Most Popular AI-Powered Personal Finance Apps. Retrieved from Analytics India Magazine: https://analyticsindiamag.com/5-most-popular-ai-powered-personal-finance-apps/

SEBI. (2019a, May 9). Reporting for Artificial Intelligence (AI) and Machine Learning (ML) applications and systems offered and used by Mutual Funds. Retrieved from Securities and Exchange Board of India: https://www.sebi.gov.in/legal/circulars/may-2019/reporting-for-artificial-intelligence-ai-and-machine-learning-ml-applications-and-systems-offered-and-used-by-mutual-funds_42932.html

SEBI. (2019b, May 28). Discussion Paper on Framework for Regulatory Sandbox. Retrieved from Securities and Exchange Board of India: https://www.sebi.gov.in/reports/reports/may-2019/discussion-paper-on-framework-for-regulatory-sandbox_43128.html

Shubh Loans. (2019). About Us. Retrieved from Shubh Loans: https://www.shubhloans.com/about.html

Singh, A., Raghavan, M., & Chugh, B. (2019, April). Primer on Consumer Data Regulation. Retrieved from 4th Dvara Research Conference: https://dvararesearch.com/conference2019/wp-content/uploads/2019/04/Primer-on-Consumer-Data-Regulation.pdf

Surden, H. (2014). Machine Learning and Law. Retrieved from Colorado Law Scholarly Commons: https://scholar.law.colorado.edu/cgi/viewcontent.cgi?article=1088&context=articles

Taulli, T. (2019). Artificial Intelligence Basics: A Non-Technical Introduction. Monrovia: Apress.

Taylor, A. (2019, July 23). How Machine Learning Will Transform P2P Lending. Retrieved from Lending Times: https://lending-times.com/2019/07/23/how-machine-learning-will-transform-p2p-lending/

The Deloitte Centre for Regulatory Strategy. (2018). AI and risk management: innovating with confidence. Retrieved from Deloitte: https://www2.deloitte.com/content/dam/Deloitte/lu/Documents/risk/lu-ai-and-risk-management.pdf

Vincent, J. (2019, November 11). Apple’s credit card is being investigated for discriminating against women. Retrieved from The Verge: https://www.theverge.com/2019/11/11/20958953/apple-credit-card-gender-discrimination-algorithms-black-box-investigation

Vishav. (2019, June 28). AI is Gradually Revolutionising the Fintech Space. Retrieved from Outlook Money: https://www.outlookindia.com/outlookmoney/technology/ai-is-gradually-revolutionising-the-fintech-space-3159

Walker, J. (2020, March 4). Artificial Intelligence Applications for Lending and Loan Management. Retrieved from Emerj: https://emerj.com/ai-sector-overviews/artificial-intelligence-applications-lending-loan-management/

WNS. (n.d.). How a Predictive Analytics-based Framework Helps Reduce Bad Debts in Utilities. Retrieved from http://www.wns.com/Portals/0/Documents/Whitepapers/WNS-WP-how-a-predictive-analytics-based-framework-helps-reduce-bad-debts-in-utilities.pdf

Yadav, R. (2020, January 21). Deep Dive: AI behind the rise of Capital Float in the lending space. Retrieved from Analytics India Magazine: https://analyticsindiamag.com/deep-dive-ai-behind-the-rise-of-capital-float-in-the-lending-space/

[1] An algorithm is a set of “encoded procedures for transforming input data into desired output, based on specific calculations” (Gillespie, 2014).

[2] The article names three other FinTech startups (Coverfox, RenewBuy and Mswipe) that use AI for non-credit purposes.

4 Responses

Thank you for this nice overview. I would love to see a deeper exploration of these use cases beyond claims made by the companies. What impact have they had on customer outcomes so far; be it serving a new category of customers, lowering costs or risks? Also how are the regulatory concerns different than those with credit scoring?

Thank you, Bindu. Our upcoming posts on regulation of AI in finance will benefit from these pointers. It will be a very exciting exercise to inquire into these questions as there appears to be very little information that is available on them.

With respect to the regulatory concerns, some early reading suggests two broad concerns: (I) There is no regulatory framework that currently applies to credit-scorers who use alternate data and (II) AI-based credit-scoring uses a significantly higher number of data-points, which can increase the risk of harm.

We notice that while credit-scoring in India is governed under the Credit Information Companies Act 2007 (sections 19-22), alternate data collection and processing activities are unregulated in India. There is very little visibility on how alternate data is being collected, what the quality of the data is or how it is being processed. From our reading, it appears that different providers are using different methods to score creditworthiness using alternate data. The absence of any data protection regulations in this regard creates concerns about increased risk of harm to users whose data is processed.

We are very interested in understanding the consumer side of the story. We are currently exploring different methodologies to obtain insights as there appears to be little information in the public domain.

Hi,

Wonderful article on the use of AI/ML for digital credit in India. As rightly mentioned in the article, many digital credit firms in particular claim to use AI algorithms for risk-modelling and credit underwriting decisions.

In terms of risk modelling, the efficacy of these risk models and the resulting credit decisions is monitored using model validation techniques like Area under the Receiver Operating Characteristic curve (AUROC), K-S charts, Concordant – Discordant Ratio, etc. These validation results are very important for the fintech lenders in order to keep these models updated.

The following two papers analyse the information content of the digital footprint – information that people leave online simply by accessing or registering on a website – for predicting consumer default.

(i) ‘On the Rise of FinTechs – Credit Scoring using Digital Footprints’ (https://rady.ucsd.edu/docs/seminars/puri_manju-paper.pdf)

(ii) Financial Inclusion and Alternate Credit Scoring for the Millennials: Role of Big Data and Machine Learning in Fintech (https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3507827) – Indian context

These are able to demonstrate that even simple, easily accessible variables from the digital footprint match the information content of credit bureau scores – the reason why fintechs are very comfortable lending to the underserved in spite of no credit bureau scores.

Thank you for the feedback and for sharing these resources, Mr Rathi. They will definitely be helpful in our work.